This week’s guest blog post is from Frederike Dümbgen, who presents her latest work from her PhD project at the Laboratory of Audiovisual Communications (LCAV), EPFL. She is currently a postdoc at the University of Toronto. Enjoy!

Bats navigate using sound. In fact, a bat’s ears are so much better developed than its eyes that bats cope better with being blindfolded than with having their ears covered. It was precisely this experiment that led to the discovery of echolocation, the principle bats use to navigate [1]. Broadly speaking, in echolocation, bats emit ultrasonic chirps and listen for their echoes to perceive their surroundings. Since its discovery in the 18th century, astonishing facts about this navigation system have been revealed. For instance, bats vary their chirps depending on the task at hand: a chirp that’s good for locating prey might not be good for detecting obstacles, and vice versa [2]. Depending on the characteristics of the reflected echoes, bats can even classify certain objects; this ability helps them find water sources, for instance [3]. Wouldn’t it be amazing to harness these findings to build novel navigation systems for autonomous agents such as drones or cars?

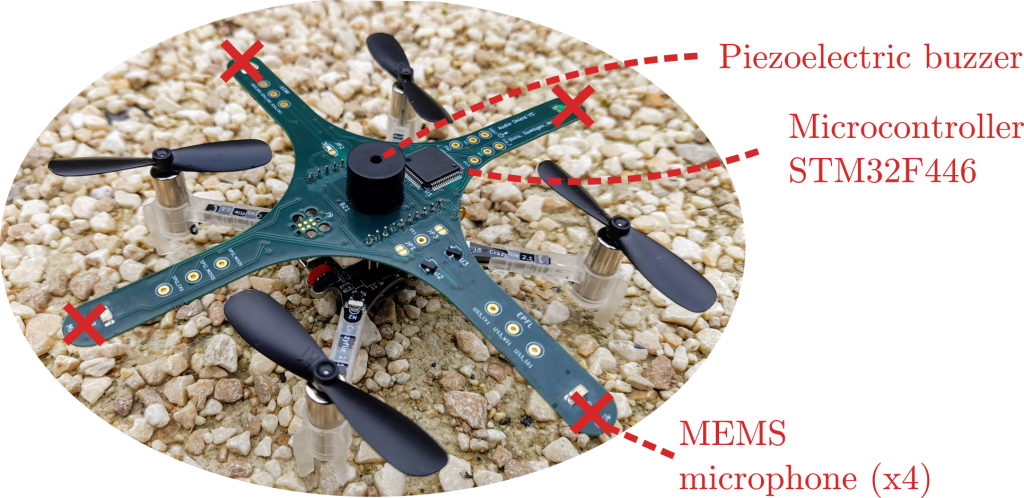

The quest for an answer to this question led us, a group of researchers from the École Polytechnique Fédérale de Lausanne (EPFL), to design the first audio extension deck for the Crazyflie drone, effectively turning it into a “Crazybat” (Figure 1). The Crazybat has four microphones, a simple piezo buzzer, and an additional microprocessor that extracts relevant information from the audio data and sends it to the main processor. All of these additional capabilities are provided by the audio extension deck, for which both the firmware and hardware design files are openly available (see footnote 1).

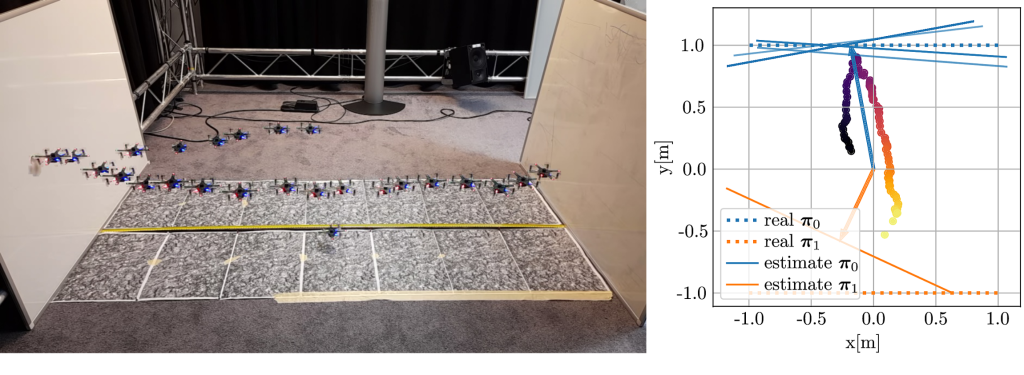

In our paper on the system [4], we show how to use chirps to detect nearby obstacles such as glass walls. Difficult to detect using lasers or cameras, glass walls are excellent sound reflectors and thus good candidates for audio-based navigation. In a first semi-static feasibility study, we show that we can locate a glass wall with centimeter accuracy, even in the presence of loud propeller noise (Video 1). With a flying drone and different kinds of reflectors, the problem becomes significantly more challenging because of motion jitter, varying propeller noise and tight real-time constraints. Nevertheless, first experiments suggest that sound-based wall detection and avoidance is possible (Figure 2 and Video 2).

The principle we use to make this work is sound interference. The sound “bounces off” the wall, and the reflected and direct sound interfere either constructively or destructively, depending on the frequency and the distance to the wall. Applying this principle at all four microphones, we can estimate both the angle and the distance of the closest wall. This is, however, not the only way to navigate using sound: our software stack, available as an open-source package for ROS2, also allows the Crazybat to extract the phase differences of incoming sound at the four microphones, which can be used to determine the location of an external sound source. We believe that a truly intelligent Crazybat would be able to switch between different operating modes depending on the conditions, just like bats that change their chirps depending on the task at hand.
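To give a feeling for the interference principle, here is a minimal sketch in Python. It is not the algorithm from the paper [4]: it assumes an idealized two-path model with the buzzer and a single microphone in the same spot, a noise-free measurement, and a made-up chirp band and reflection gain. All function names and parameter values are hypothetical. Under these assumptions, the received power varies like a cosine over frequency, and the "period" of that cosine reveals the distance to the wall.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, approximate value at room temperature


def interference_power(freqs_hz, distance_m, reflection_gain=0.5):
    """Power of direct plus reflected sound (idealized two-path model).

    Assumes source and microphone are co-located, so the echo travels
    an extra 2 * distance_m compared to the direct sound.
    """
    delay_s = 2.0 * distance_m / SPEED_OF_SOUND
    phase = 2.0 * np.pi * freqs_hz * delay_s
    return 1.0 + reflection_gain**2 + 2.0 * reflection_gain * np.cos(phase)


def estimate_distance(freqs_hz, power, candidates_m):
    """Return the candidate distance whose cosine pattern best matches the power."""
    centered = power - power.mean()  # remove the constant offset
    delays_s = 2.0 * candidates_m / SPEED_OF_SOUND
    templates = np.cos(2.0 * np.pi * np.outer(delays_s, freqs_hz))
    scores = templates @ centered  # correlation with each candidate pattern
    return candidates_m[np.argmax(scores)]


# Hypothetical chirp band and a wall placed 30 cm away.
freqs = np.linspace(3000.0, 5000.0, 200)
measured = interference_power(freqs, distance_m=0.30)

# Search on a 1 cm grid between 5 cm and 1 m.
candidates = np.linspace(0.05, 1.0, 96)
estimate = estimate_distance(freqs, measured, candidates)
print(f"estimated wall distance: {estimate:.2f} m")
```

In a real setting, propeller noise, the geometry between buzzer and microphones, and several reflectors would all disturb this clean pattern, which is part of what makes the flying case so much harder.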

Note that the ROS2 software stack is not limited to the Crazybat: we have isolated the hardware-dependent components so that the audio-based navigation algorithms can be ported to any platform. As an example, we include results on the small wheeled e-puck2 robot in [4]; it performs better than the Crazybat thanks to the absence of propeller noise and motion jitter.

This research project has taught us many things, above all an even greater admiration for the abilities of bats! Dealing with sound is pretty hard and very different from other prevalent sensing modalities such as cameras or lasers. Nevertheless, we believe it is an interesting alternative for scenarios with poor visibility or limited computing power or memory. We hope that other researchers will join us in the quest to exploit audio for navigation, and that the tools we make publicly available, both the hardware and the software stack, lower the entry barrier for new researchers.

1 The audio extension deck works in a “plug-and-play” fashion like all other extension decks of the Crazyflie. It has been tested in combination with the flow deck, for stable flight in the absence of a more advanced localization system. The deck performs frequency analysis on incoming raw audio data from the four microphones and sends the relevant information to the Crazyflie drone, where a custom driver converts it to the CRTP protocol and sends it to the base station for further processing in the ROS2 stack.
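As a rough illustration of what "frequency analysis" on four microphone signals can look like, here is a small Python sketch. It is not the deck's firmware: the sample rate, frame length, chosen frequency bin and all function names are assumptions made for this example. It keeps only a few frequency bins per microphone (a compact summary that is much smaller than the raw audio) and then computes the phase of each microphone relative to the first one.

```python
import numpy as np

SAMPLE_RATE_HZ = 32000  # hypothetical sample rate
FRAME_LENGTH = 1024     # hypothetical number of samples per frame


def extract_bins(frames, bin_indices):
    """Return the complex spectrum of each microphone at the selected bins.

    frames has shape (n_mics, n_samples); the result has shape
    (n_mics, len(bin_indices)).
    """
    window = np.hanning(frames.shape[1])  # reduce spectral leakage
    spectrum = np.fft.rfft(frames * window, axis=1)
    return spectrum[:, bin_indices]


def phase_differences(bins):
    """Phase of microphones 1..n-1 relative to microphone 0, in radians."""
    return np.angle(bins[1:] * np.conj(bins[0]))


# Synthetic test signal: a 4 kHz tone arriving with a different phase
# at each of the four microphones (the phases are made up).
bin_index = 128
tone_hz = bin_index * SAMPLE_RATE_HZ / FRAME_LENGTH  # 4000 Hz
true_phases = np.array([0.0, 0.3, -0.5, 1.0])
t = np.arange(FRAME_LENGTH) / SAMPLE_RATE_HZ
frames = np.cos(2.0 * np.pi * tone_hz * t[None, :] + true_phases[:, None])

bins = extract_bins(frames, [bin_index])
diffs = phase_differences(bins)[:, 0]
print(np.round(diffs, 3))
```

Phase differences like these are the kind of quantity that can later be used to estimate the direction of an external sound source.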

References

[1] Galambos, Robert. “The Avoidance of Obstacles by Flying Bats: Spallanzani’s Ideas (1794) and Later Theories.” Isis 34, no. 2 (1942): 132–40. https://doi.org/10.1086/347764.

[2] Fenton, M. Brock, Alan D. Grinnell, Arthur N. Popper, and Richard R. Fay, eds. “Bat Bioacoustics.” In Springer Handbook of Auditory Research, 1992. https://doi.org/10.1007/978-1-4939-3527-7.

[3] Greif, Stefan, and Björn M Siemers. “Innate Recognition of Water Bodies in Echolocating Bats.” Nature Communications 1, no. 106 (2010): 1–6. https://doi.org/10.1038/ncomms1110.

[4] F. Dümbgen, A. Hoffet, M. Kolundžija, A. Scholefield and M. Vetterli, “Blind as a Bat: Audible Echolocation on Small Robots,” in IEEE Robotics and Automation Letters (Early Access), 2022. https://doi.org/10.1109/LRA.2022.3194669.