At Bitcraze, we spend a lot of time making things fly. Even though flying robots are, and will always be, fascinating to watch, every now and then it’s refreshing to try something different. Last Friday, I started exploring an idea that has been lying around for a while now: a ground robot built on the same Crazyflie infrastructure – the radio communication, the deck ecosystem and the cfclient connection. This robot is also known as the Crazyrat.

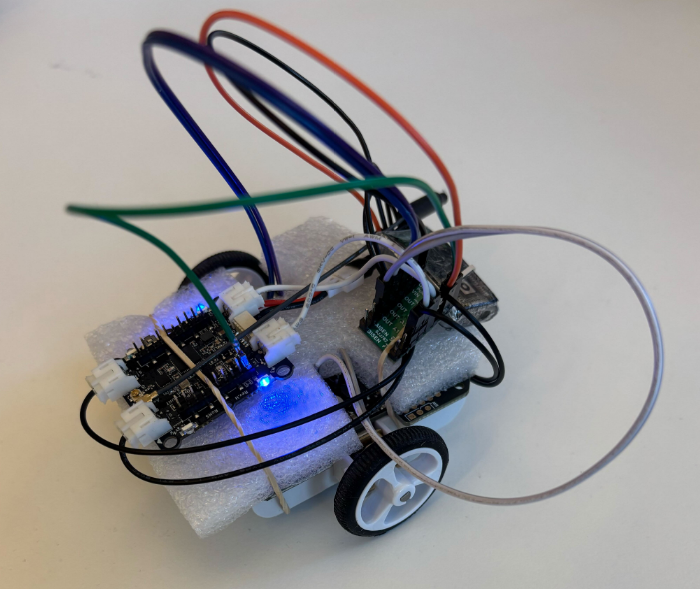

Fair warning: this is a first prototype, and it definitely looks like one. Jumper wires going in every direction, plastic foam and rubber bands to keep everything in place. But it drives, and it drives fast! That’s enough to call it a success and write about it.

Hardware

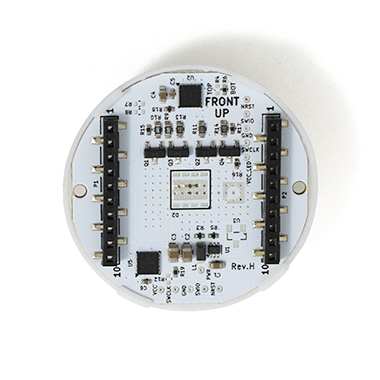

The entire vehicle is built around the Crazyflie Bolt 1.1. The S pins of its motor connectors drive a small H-bridge motor driver, which enables proper bidirectional DC motor control. For the Bolt to drive the H-bridge, the motors must be configured as brushed.

For the motor driver, I used a Pololu DRV8833 Dual Motor Driver Carrier. I experimented with two different motor sets: one with a 75:1 gear ratio and one with 15:1. Even though the 75:1 motors offered better precision and were easier to drive, the 15:1 setup was the clear winner thanks to its speed.

The motors, the chassis and the power supply were taken from a Pololu 3pi+ robot.

Firmware

For the firmware, I used the Out-Of-Tree functionality of the crazyflie-firmware, which makes it easy to integrate custom controllers. The controller itself is relatively simple: it maps the pitch and yaw commands sent from the cfclient to throttle and steering respectively, then sends the calculated values directly to the motors as PWM signals.

    /* Scale the pitch and yaw-rate setpoints by maxPitch and maxYaw. */
    float throttle = -(setpoint->attitude.pitch / maxPitch);
    float steer = -(setpoint->attitudeRate.yaw / maxYaw) * steerGain;

    /* Differential-drive mixing: steering is subtracted on the left and added on the right. */
    float left = throttle - steer;
    float right = throttle + steer;

    control->controlMode = controlModePWM;

    /* For each wheel, the magnitude goes to one H-bridge input and zero to the other. */
    control->normalizedForces[0] = (left > 0.0f) ? left : 0.0f;    /* M1 AIN1 left fwd */
    control->normalizedForces[1] = (left < 0.0f) ? -left : 0.0f;   /* M2 AIN2 left rev */
    control->normalizedForces[2] = (right > 0.0f) ? right : 0.0f;  /* M3 BIN1 right fwd */
    control->normalizedForces[3] = (right < 0.0f) ? -right : 0.0f; /* M4 BIN2 right rev */

The Crazyrat in action

To test the prototype, I used a gamepad and drove it through the cfclient – no modifications to the client are required. Here’s a video showing the capabilities of the Crazyrat using the 15:1 motor setup.

What’s Next

This project was a really fun learning experience about the potential and the limitations of ground robots. These are some of the directions I plan to explore in the future:

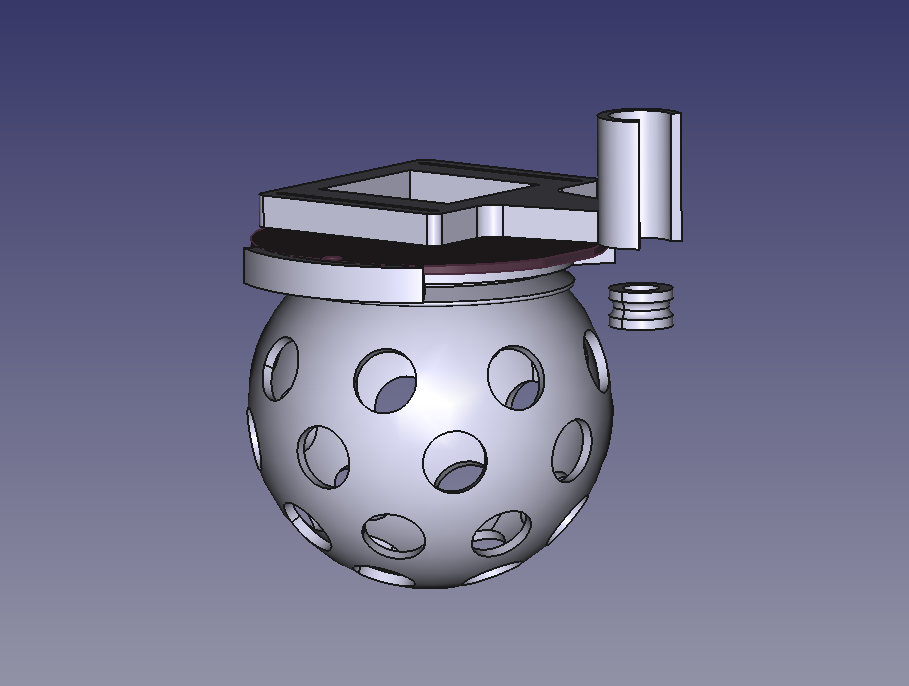

- Robust design – Design a proper chassis, clean up the wiring and make the whole vehicle smaller, to fit better in the Crazyflie ecosystem.

- Deck integration – Use either the Lighthouse or the LPS deck for positioning and the Multi-Ranger deck for obstacle detection.

- Experiment – Explore heterogeneous multi-agent swarming scenarios with the Crazyrat and the Crazyflie.