Today, we are joined once again by Maurice Zemp, who presented his work in an earlier blog post.

Road to the Finals

I had officially completed my Matura thesis in October 2024 and submitted it to the Schweizer Jugend forscht competition. When I was selected for the semifinals, I was given the chance to present my work in front of a jury. Their feedback was highly constructive and came with clear requirements: for the finals, I would need to provide more in-depth analyses of the individual system components of my project. At first, this felt like a challenge, but in the process I realized how much these refinements elevated my research. By the time the finals approached, I felt both nervous and proud, knowing that the work I would present had grown far beyond the version I had initially submitted. On April 24, 2025, the big moment finally arrived – the start of the national finals.

Day 1

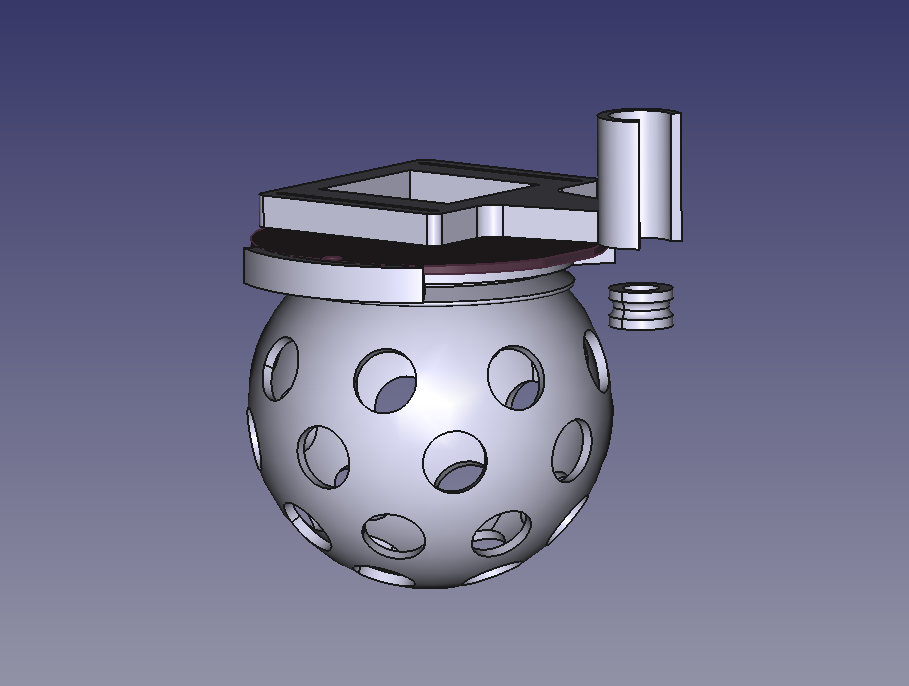

The day began with my journey to ETH Zurich. Traveling by public transport, I carried my Crazyflie drone and the racing gate with me – equipment that had accompanied me through countless hours of development and testing, and that I had brought along to make my project easier to understand. Arriving at ETH, I was greeted warmly at the reception, where I first felt a sense of belonging among dozens of passionate and curious young scientists.

The morning was dedicated to setting up our booths. Piece by piece, the exhibition hall transformed into a vibrant space filled with prototypes, posters, and creative ideas. Once my own stand was ready, I finally had a moment to take in the atmosphere and to start the first conversations. In the afternoon, we were treated to a guided city tour through Zurich. Walking through the old streets, hearing stories about the city, and enjoying the fresh air was the perfect opportunity to get to know the other participants better.

Later that day, alumni of Schweizer Jugend forscht visited the exhibition. For the first time, I had the chance to present my project outside of the jury context, and I was surprised by the interest and thoughtful questions I received.

By the time we arrived at our youth hostel late in the evening, the excitement of the day had fully caught up with me. Exhausted but exhilarated, I fell into bed.

Day 2

The second day began with breakfast at the youth hostel, followed by a short tram ride back to ETH Zurich. The morning program was dedicated to the jury sessions, which represented one of the most important parts of the entire competition. Unlike in the semifinals, where I just explained my project and was asked some general questions, this time I was able to discuss my project in detail with several experts – including those specializing in fields beyond my own topic.

These conversations quickly turned into fascinating discussions. The jurors asked insightful questions, challenged certain assumptions, and encouraged me to think more deeply about the potential applications of my work. At the same time, I received a great deal of praise, which was both reassuring and motivating. It was incredibly rewarding to see that months of effort, refinement, and problem-solving were being recognized by experienced professionals.

In the afternoon, the doors of ETH opened to the public for the exhibition. Friends, family members, and curious visitors from outside the competition came to explore the stands. Presenting my project in this setting felt very different from the formal jury discussions of the morning – it was more relaxed, conversational, and filled with spontaneous questions. I especially enjoyed seeing how people unfamiliar with drone technology reacted to the project, and it gave me the chance to practice explaining complex ideas in a way that was accessible to everyone. After such a full day of interactions, we returned to the youth hostel in the evening. The atmosphere there was much calmer, as everyone tried to recharge in preparation for the final day.

Day 3

The final day once again began with our journey to ETH Zurich. In the morning, the exhibition hall opened its doors for a second round of public visits. This time, the experience was especially meaningful for me, as my family came to see my project in person.

After lunch, it was finally time for the highlight of the competition: the award ceremony. A live band set the stage, and soon the opening speeches began. The tension in the room was almost tangible – every participant knew that months, if not years, of work were culminating in this single event. I felt both nervous and excited, my heart beating faster with each passing moment.

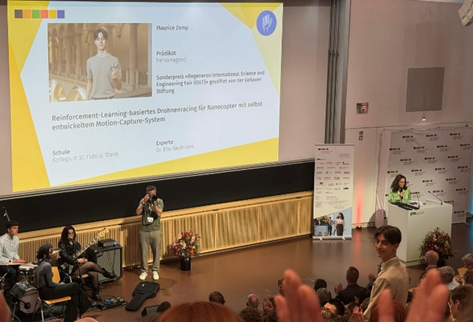

Then came an unexpected twist: even before the regular prizes and certificates were announced, the jury revealed the winners of the most prestigious special awards. To my immense joy, my name was called. I had been selected to represent Switzerland at the International Science and Engineering Fair (ISEF) 2026 in Phoenix, Arizona. The sense of relief, excitement, and pride I felt in that moment is difficult to describe – it was a dream come true.

The ceremony continued with an inspiring keynote by Thomas Zurbuchen, NASA's former head of science, who shared his journey in science and reminded us of the importance of perseverance.

Finally, the time came for the official certificates. One by one, every participant was called to receive their recognition. When my turn came, I was awarded the highest possible distinction, hervorragend (outstanding), which came with a prize of CHF 1500. The applause and congratulations that followed made the moment even more unforgettable.

The evening concluded with an apéro, where I had the chance to exchange thoughts with professors, fellow participants, and many guests. I was overwhelmed by the warm words of encouragement and congratulations I received for both my project and the recognition it had achieved.

After three exciting, inspiring, and at times exhausting days, it was finally time to return home – this time together with my parents, carrying not only my luggage but also an experience that I will cherish for a lifetime.