When people think of Urban Air Mobility (UAM), they tend to picture large, futuristic eVTOL aircraft carrying people between skyscrapers, not a 30-g nano-quadrotor that fits in the palm of a hand. However, testing full-scale aircraft in chaotic urban wind fields is dangerous, expensive, and practically impossible. During my PhD research, I found that the brushless Crazyflie makes an ideal subscale proxy for rigorously exploring flight in these complex microclimates.

Urban microclimates are notoriously unpredictable and dangerous for aircraft. High-intensity wind gusts and spatial gradients are formed by multi-scale interactions between the atmospheric boundary layer and the buildings, bridges, and other urban infrastructure that define our skylines. There are entire fields dedicated to studying urban wind patterns using supercomputers and precise wind tunnel testing, but how can drones leverage all this data and modeling in real time?

Put another way, if we want package delivery drones and electric air taxis operating safely in urban airspace, we need innovative ways for aircraft to predict hazardous wind conditions and adapt to them on the fly. Our initial research showed that drones could predict surrounding time-averaged urban winds with reasonable accuracy using LiDAR, and our follow-up work suggests that incorporating these local wind predictions into navigation could reduce potential crashes and even improve energy efficiency.

This article breaks down the hardware details behind the experiments I ran during the final six months of my thesis, where the brushless Crazyflie played a pivotal role in validating my concepts in the real world.

A Subscale UAM Testbed

A lot of research related to drone wind estimation is limited by the lack of ground truth data available to compare against. With my research, I really wanted to use a highly instrumented laboratory environment, where we could tightly control the experimental conditions and get accurate, repeatable trials without being at the mercy of the weather.

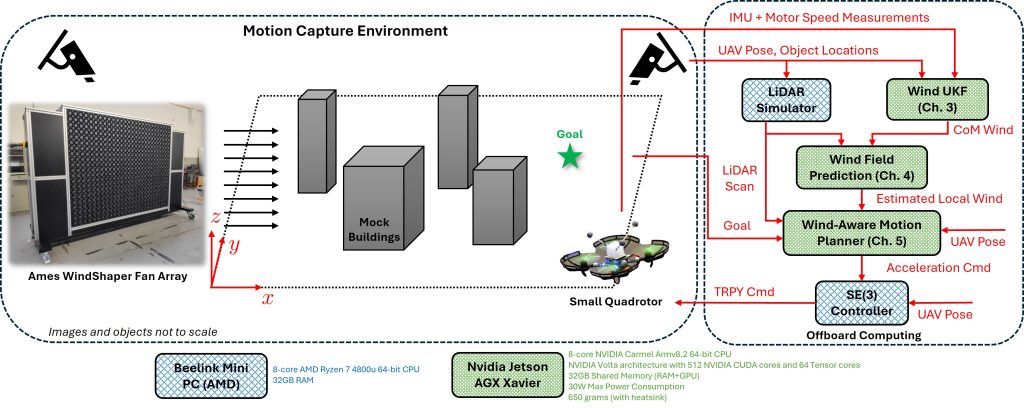

To accomplish that, we used the WindShaper facility at NASA Ames Research Center in Mountain View, CA: a state-of-the-art open-air wind tunnel (the “WindShaper”) positioned inside a basketball-court-sized motion capture space. We mimicked “urban winds” using wooden boxes meant to represent buildings.

The problem was that the WindShaper was only so big. We needed a similarly scaled drone that could represent our eVTOL. That’s where the Crazyflie came in!

The (Modded) Crazyflie

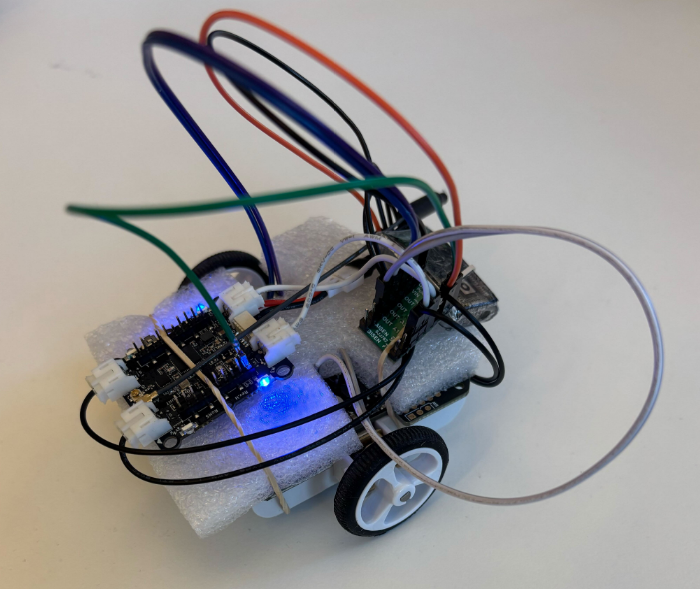

For multiple reasons, all relating to dynamic similitude, the Crazyflie ended up being the perfect size for our experiments. The timing was perfect too, because the brushless Crazyflie had just been released, offering a similar footprint to the original Crazyflie but with the additional thrust and battery life necessary to carry our payloads and handle gusty wind conditions.

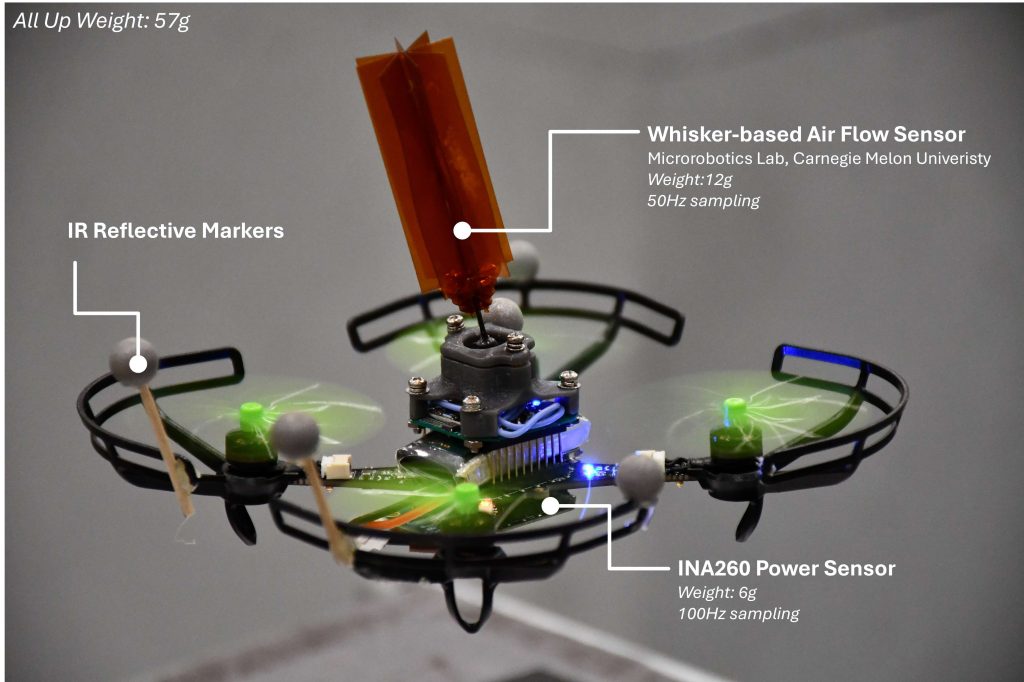

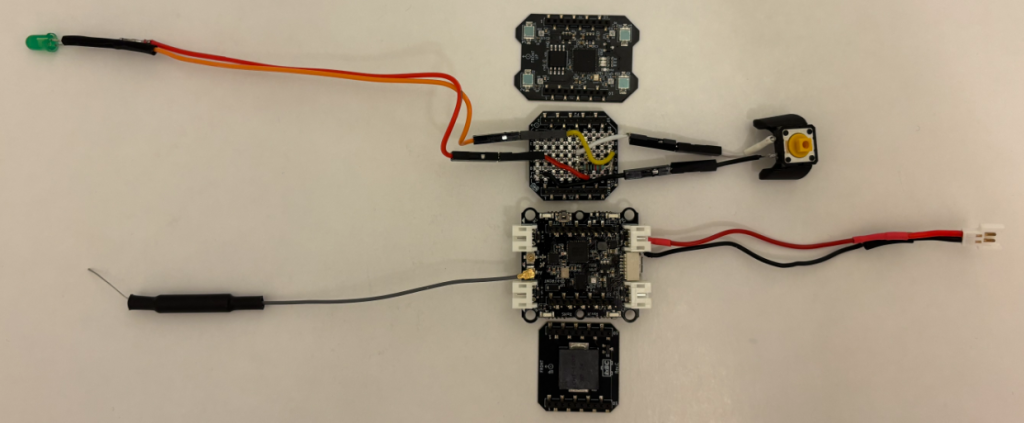

We modified the Crazyflie with two additional sensors. The first was an INA260 power sensor that we used for… well, measuring the power draw from the battery! The second sensor was a bit more interesting. In partnership with the Microrobotics Lab at Carnegie Mellon University, we put a whisker-based air flow sensor on the Crazyflie to measure the wind. You can read more about that neat sensor here.
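For readers who want to work with raw INA260 readings, here is a minimal off-board sketch of the unit conversion. It is not the firmware driver used in these experiments (that code is not shown here). The scale factors are the register LSB sizes from the INA260 datasheet; the function names and the sample register words are hypothetical.

```python
# LSB sizes taken from the INA260 datasheet.
CURRENT_LSB_A = 1.25e-3   # amps per count (signed register)
VOLTAGE_LSB_V = 1.25e-3   # volts per count (bus voltage register)
POWER_LSB_W = 10e-3       # watts per count (power register)


def to_signed16(word: int) -> int:
    """Interpret a 16-bit register word as a two's complement integer."""
    word &= 0xFFFF
    return word - 0x10000 if word & 0x8000 else word


def decode_ina260(current_raw: int, voltage_raw: int, power_raw: int) -> dict:
    """Convert raw INA260 register words to amps, volts, and watts."""
    return {
        "current_a": to_signed16(current_raw) * CURRENT_LSB_A,
        "voltage_v": (voltage_raw & 0xFFFF) * VOLTAGE_LSB_V,
        "power_w": (power_raw & 0xFFFF) * POWER_LSB_W,
    }


# Hypothetical register words, chosen only to exercise the conversion.
sample = decode_ina260(current_raw=2000, voltage_raw=3040, power_raw=950)
print(sample)
```

The current register is signed because the INA260 can measure current in both directions; for a battery that only discharges, the sign mostly serves as a wiring sanity check.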

The all-up weight, including the battery, power sensor, whisker, and motion capture markers, was 57 g, almost double the standard weight. With a 350 mAh battery we could get about 3 to 5 minutes of flight, depending on the wind speed, which was just enough time for us to run our experiments.

Actuator, Aerodynamic, and Power System Identification

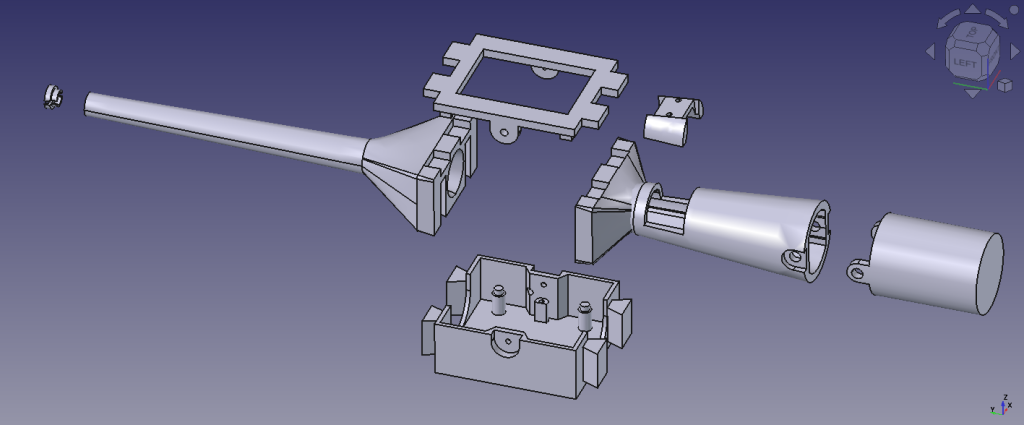

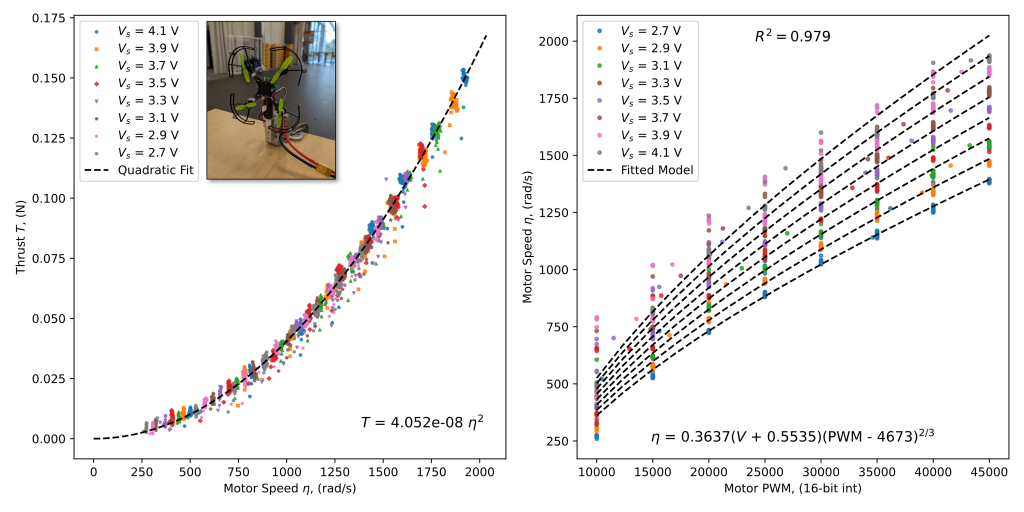

I did two experiments to characterize the brushless Crazyflie. The first experiment involved strapping the Crazyflie onto a thrust stand with an optical RPM sensor to model the relationships between thrust, speed, commanded PWM signal, and battery supply voltage. The last three signals were relevant because at the time the firmware didn’t support direct motor speed measurements!
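To give a feel for what this kind of actuator identification can look like, here is a minimal sketch. It assumes two common model forms that are not confirmed by this article: motor speed affine in the effective voltage (PWM duty cycle times supply voltage), and thrust proportional to motor speed squared. All data below is synthetic, and every coefficient is a hypothetical placeholder rather than a measured value.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for thrust-stand logs (NOT measured data).
duty = rng.uniform(0.2, 1.0, 200)       # commanded PWM duty cycle, 0..1
v_batt = rng.uniform(3.3, 4.2, 200)     # battery supply voltage [V]
true_a, true_b = 600.0, 150.0           # hypothetical speed-model coefficients
true_kt = 1.0e-7                        # hypothetical thrust coeff [N/(rad/s)^2]
omega = true_a * duty * v_batt + true_b + rng.normal(0.0, 5.0, 200)  # [rad/s]
thrust = true_kt * omega**2 + rng.normal(0.0, 1e-4, 200)             # [N]

# Fit 1 (assumed form): omega ~ a * (duty * v_batt) + b
A = np.column_stack([duty * v_batt, np.ones_like(duty)])
(a_hat, b_hat), *_ = np.linalg.lstsq(A, omega, rcond=None)

# Fit 2 (assumed form): thrust ~ k_t * omega^2, one-parameter least squares
omega_sq = omega**2
kt_hat = float(omega_sq @ thrust / (omega_sq @ omega_sq))


def predict_thrust(duty_cmd: float, v_supply: float) -> float:
    """Predict single-motor thrust [N] from PWM duty and supply voltage."""
    omega_pred = a_hat * duty_cmd * v_supply + b_hat
    return kt_hat * omega_pred**2


print(f"a={a_hat:.1f}, b={b_hat:.1f}, k_t={kt_hat:.3e}")
print(f"thrust at 60% duty, 3.8 V: {predict_thrust(0.6, 3.8):.3f} N")
```

Chaining the two fits is what makes the battery voltage matter: without a direct speed measurement in flight, the motor speed has to be reconstructed from the commanded PWM and the supply voltage before thrust can be estimated.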

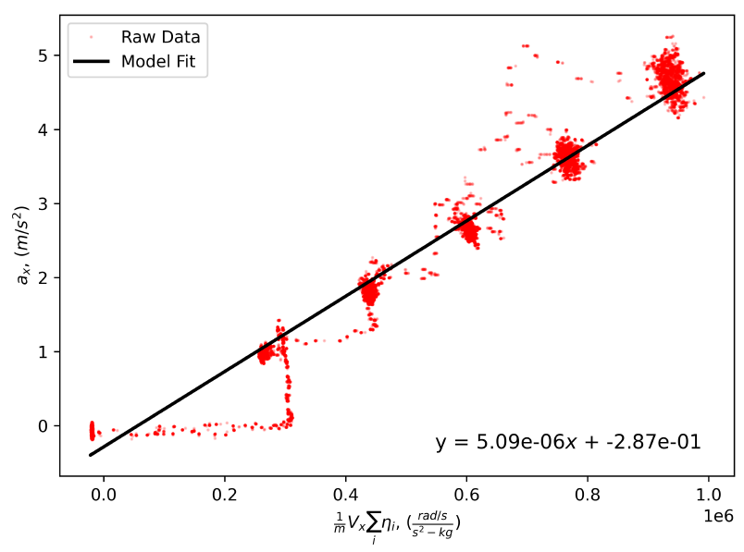

The second experiment involved subjecting the Crazyflie to wind speeds from 0 m/s up to 8 m/s. I relied on three sets of measurements: the onboard accelerometer to measure the net drag force, which required subtracting the static thrust from the propellers (hence the previous experiment); the INA260 sensor to measure power draw from the battery; and a combination of motion capture and a pitot-static probe on a tripod to measure the drone’s ground speed and the wind speed, respectively.

The plot above shows a surprisingly linear relationship between the acceleration and the product of airspeed and summed motor speeds for the x body axis. This is known as rotor drag, and it’s generally considered the dominant form of drag for quadrotors at lower airspeeds. The slope of this line is the rotor drag coefficient. This model lets us infer the airspeed along the x axis from the accelerometer and motor speeds alone, no dedicated wind sensor necessary!
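As a rough illustration of how such a model can be fit and then inverted, here is a minimal sketch. It assumes the rotor drag form common in the quadrotor literature, where the x-axis acceleration is proportional to the airspeed multiplied by the sum of the motor speeds, with a sign convention in which drag opposes the airspeed. The data is synthetic, and the coefficient and motor speed ranges are hypothetical placeholders.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic placeholder data (NOT the measurements from the experiment).
k_true = 2.0e-5                              # hypothetical rotor drag coefficient
airspeed_x = rng.uniform(0.0, 8.0, 300)      # body x airspeed [m/s]
omega_sum = rng.uniform(6000.0, 9000.0, 300) # sum of four motor speeds [rad/s]
accel_x = -k_true * omega_sum * airspeed_x + rng.normal(0.0, 0.02, 300)  # [m/s^2]

# Assumed model: accel_x = -k * omega_sum * airspeed_x.
# Least-squares slope of a line through the origin.
regressor = omega_sum * airspeed_x
k_hat = -float(regressor @ accel_x / (regressor @ regressor))


def estimate_airspeed_x(accel_meas: float, omega_sum_meas: float) -> float:
    """Invert the assumed rotor drag model to recover x-axis airspeed [m/s]."""
    return -accel_meas / (k_hat * omega_sum_meas)


print(f"fitted rotor drag coefficient: {k_hat:.3e}")
print(f"airspeed estimate: {estimate_airspeed_x(-0.64, 8000.0):.2f} m/s")
```

The inversion divides by the summed motor speeds, so it is only meaningful while the motors are spinning, which is always the case in flight.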

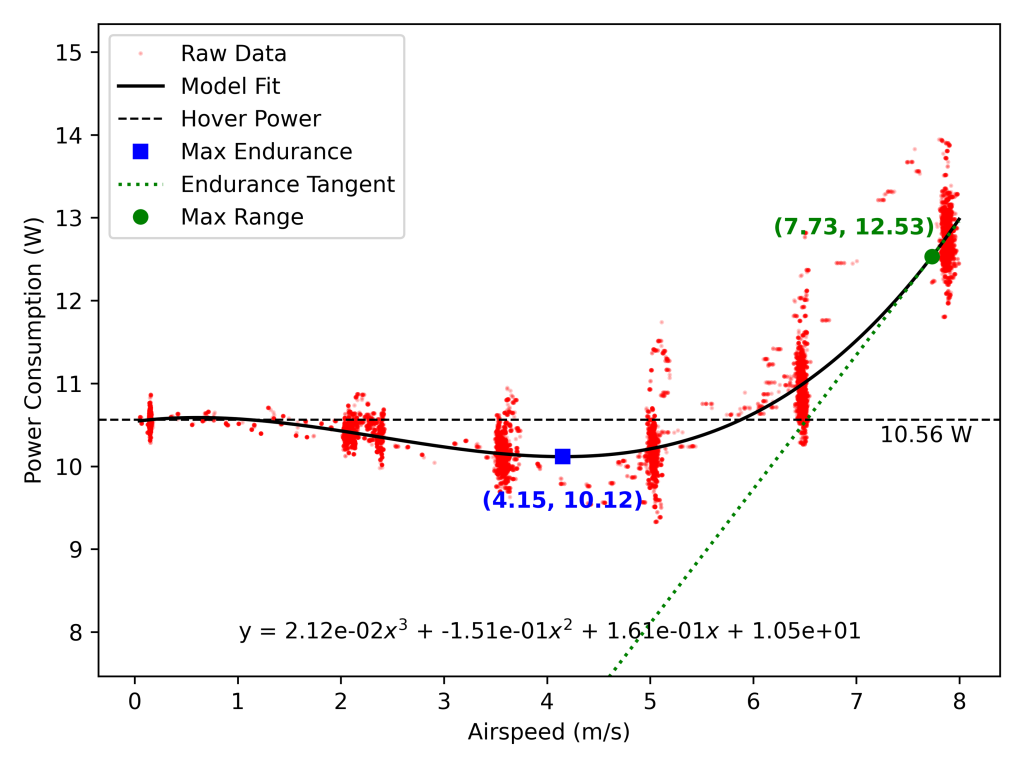

Lastly, I used the same experiment to model the power curve for the Crazyflie. We showed that there is a measurable dip in the average power consumed by the brushless Crazyflie in steady, level forward flight. This effect is well documented in full-scale helicopters, where the translational lift effect makes the rotors more efficient in forward flight, but it is rarely captured or documented at a scale as small as the Crazyflie. To the best of my knowledge, this is one of the first empirical confirmations of any sort of power dip on a nano-UAV, which may (or may not!) have relevance as we think more about taking these tiny drones out into the wild.
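One simple way to locate a dip like this in logged data is to fit a smooth curve to power versus airspeed and find its minimum. The sketch below assumes a quadratic is an adequate local fit, which is a modeling choice of this example and not necessarily the model used in the thesis. The curve is synthetic: only the 10.56 W hover value comes from this article, and the other coefficients are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(2)

# Synthetic placeholder power curve (NOT the measured data).
airspeed = np.linspace(0.0, 8.0, 200)                  # [m/s]
power = 10.56 - 0.40 * airspeed + 0.08 * airspeed**2   # hypothetical shape [W]
power = power + rng.normal(0.0, 0.1, airspeed.size)    # measurement noise

# Quadratic least-squares fit: P(v) ~ p2*v^2 + p1*v + p0
p2, p1, p0 = np.polyfit(airspeed, power, 2)

# The minimum of an upward-opening parabola sits at v = -p1 / (2*p2).
v_min_power = -p1 / (2.0 * p2)
p_min = np.polyval([p2, p1, p0], v_min_power)
dip_w = p0 - p_min   # power saved relative to the fitted hover value

print(f"minimum-power airspeed: {v_min_power:.2f} m/s")
print(f"dip relative to hover: {dip_w:.2f} W")
```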

My measurements indicate an average power consumption of 10.56 W in hover, placing the hover efficiency at roughly 0.19 W/g. Keep in mind this is for the modded Crazyflie weighing in at 57 g.
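The arithmetic behind these numbers is easy to reproduce. The short sketch below also computes an idealized hover endurance bound, assuming a nominal single-cell LiPo voltage of 3.7 V and that the full rated capacity is usable; both are assumptions of this example, not measured values.

```python
hover_power_w = 10.56      # average hover power from the measurements above
mass_g = 57.0              # all-up weight of the modded Crazyflie
capacity_mah = 350.0       # battery capacity quoted earlier in the article
nominal_voltage_v = 3.7    # ASSUMED nominal single-cell LiPo voltage

# Specific hover power in watts per gram.
specific_power_w_per_g = hover_power_w / mass_g

# Idealized endurance: stored energy divided by hover power.
energy_wh = capacity_mah / 1000.0 * nominal_voltage_v
ideal_hover_min = energy_wh / hover_power_w * 60.0

print(f"specific hover power: {specific_power_w_per_g:.3f} W/g")
print(f"ideal hover endurance: {ideal_hover_min:.1f} min")
```

With these inputs the specific power comes out at about 0.185 W/g, in line with the figure quoted above up to rounding of the inputs. The idealized endurance is an upper bound of a little over 7 minutes; the 3 to 5 minutes seen in practice is plausibly explained by flying in wind, voltage sag under load, and not draining the battery completely.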

Putting It All Together

The ultimate goal with all these experiments was to test out my methods for wind prediction & estimation and wind/obstacle-aware motion planning in real time. We tested these algorithms through multiple trials across five “building” configurations, totaling over an hour of actual flight time. Below is a video showcasing the wind field prediction happening in real time.

In this trial, the Crazyflie is trying to track the XY location of the end of the wand while also avoiding the obstacles using (simulated) LiDAR scans. Meanwhile, the WindShaper is blowing wind at the drone at about 4 m/s. This particular trial focused more on wind prediction than on motion planning, so the motions aren’t particularly exciting. Nevertheless, the network captured salient flow features like the high-speed wind tunnel effect between the building clusters.

Closing Thoughts

Building this testbed showed that nano-quadrotors like the Crazyflie are far more than just educational toys or swarm demonstrators. When properly characterized, their physical scale makes them well suited to serve as safe, high-fidelity proxies for validating the next generation of Urban Air Mobility algorithms.

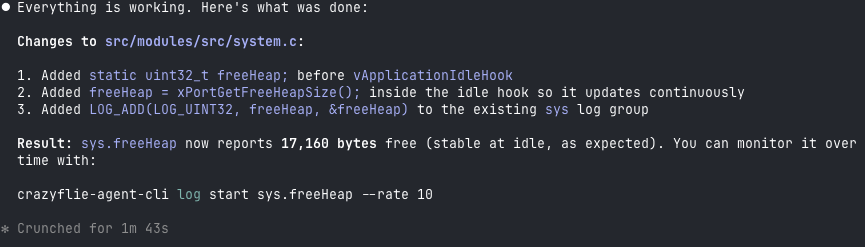

None of this would have been viable without the open-source architecture of the Bitcraze ecosystem. Being able to easily modify the firmware to interface with our custom INA260 power monitor, log high-frequency accelerometer measurements, and communicate with the external motion capture system and the WindShaper over ROS allowed us to treat the Crazyflie as a true subscale UAM development kit.

If you want to dive deeper into anything you found interesting in this article, or better yet get access to the data I collected, you can find more details in my full dissertation (available on arXiv) or reach out to me on LinkedIn!