It’s now become a tradition to create a video compilation showcasing the most visually stunning research projects that feature the Crazyflie. Since our last update, so many incredible things have happened that we felt it was high time to share a fresh collection.

As always, the toughest part of creating these videos is selecting which projects to highlight. There are so many fantastic Crazyflie videos out there that if we included them all, the final compilation would last for hours! If you’re interested, you can find a more extensive list of our products used in research here.

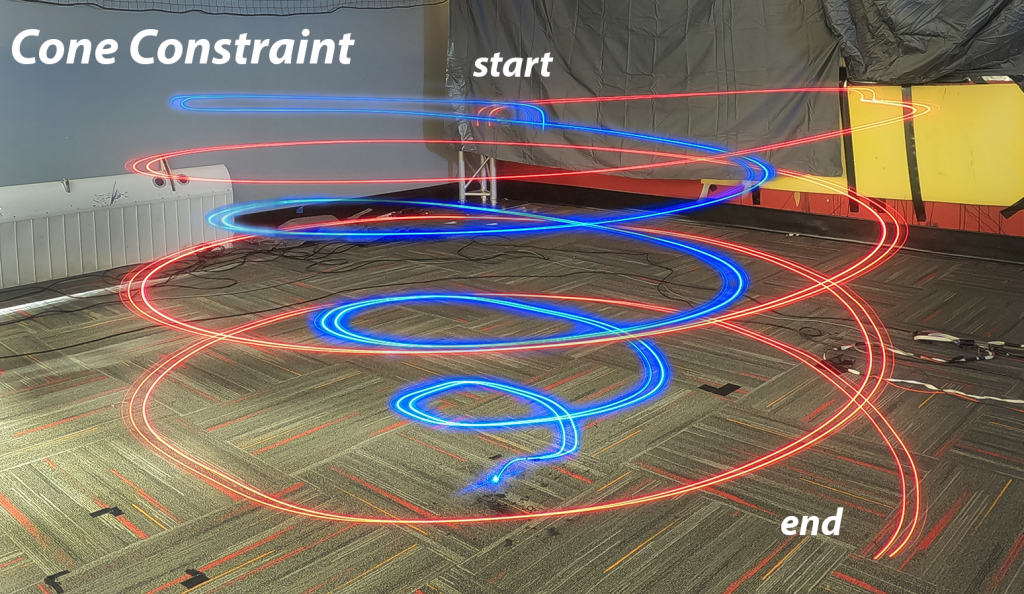

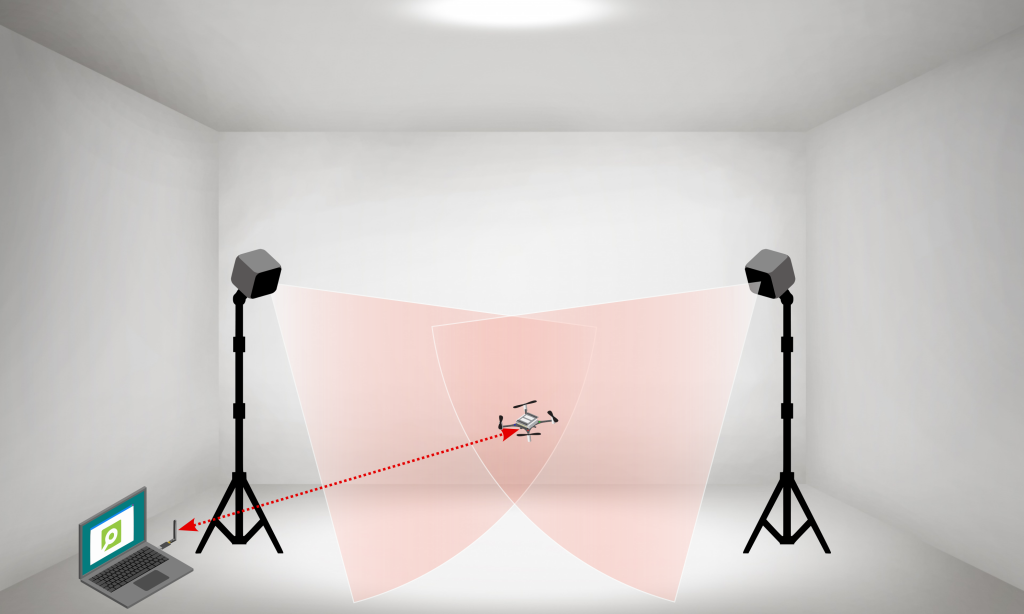

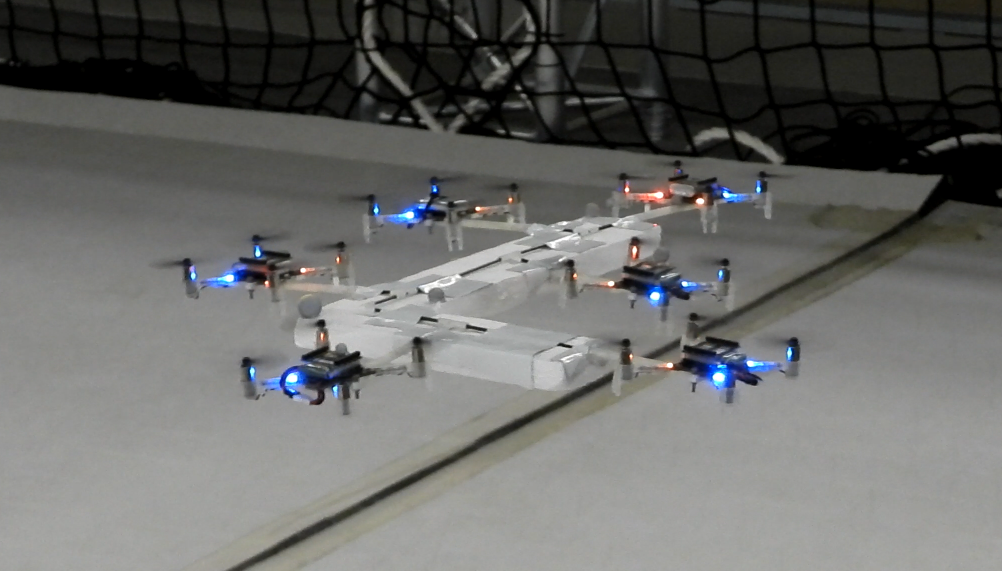

The video covers 2023 and 2024 so far. We were once again amazed by the incredible things the community has accomplished with the Crazyflie. The selection shows the broad range of research subjects the Crazyflie can be a part of. It has been used for mapping and in swarms, even heterogeneous ones! Thanks to its small size, it has also been picked for human-robot interaction projects (including our very own Joseph La Delfa showcasing his work). And it’s even been turned into a hopping quadcopter!

Here is a list of all the research that has been included in the video:

- An agile monopedal hopping quadcopter with synergistic hybrid locomotion

Songnan Bai, Qiqi Pan, Runze Ding, Huaiyuan Jia, Zhengbao Yang, Pakpong Chirarattananon

City University of Hong Kong, Massachusetts Institute of Technology - Articulating Mechanical Sympathy for Somaesthetic Human-Machine Relations

Joseph La Delfa & Rachael Garrett & Airi Lampinen & Kristina Höök

KTH - Safe Operations of an Aerial Swarm via a Cobot Human Swarm interface

Derek A. Paley, Sydrak S. Abdi

University of Maryland - db-CBS: Discontinuity-Bounded Conflict-Based Search for Multi-Robot Kinodynamic Motion Planning Akmaral Moldagalieva, Joaquim Ortiz-Haro, Marc Toussaint, Wolfgang Hönig

Technical University of Berlin - Training on the Fly: On-device Self-supervised Learning aboard Nano-drones within 20mW

Elia Cereda, Alessandro Giusti, and Daniele Palossi

UPI/ SUPSI IDSIA, ETH Zurich - Learning Decentralized Flocking Controllers with Spatio-Temporal Graph Neural Network

Siji Chen, Yanshen Sun, Peihan Li, Lifeng Zhou, Chang-Tien Lu

Virginia Tech, Drexel University - From Shadows to Light: A Swarm Robotics Approach With Onboard Control for Seeking Dynamic Sources in Constrained Environments

Tugay Alperen Karagüzel, Victor Retamal, Nicolas Cambier, & Eliseo Ferrante

Vrije Universiteit Amsterdam - Multi-Robot Target Tracking with Sensing and Communication Danger Zones

Jiazhen Liu, Peihan Li, Yuwei Wu, Gaurav S. Sukhatme, Vijay Kumar, Lifeng Zhou USC. Georgia Tech, University of Pennsylvania, Drexel University - NanoSLAM: Enabling Fully Onboard SLAM for Tiny Robots

Vlad Niculescu, Tommaso Polonelli, Michele Magno, Luca Benini ETH Zurich, PULP - Quadrolltor: A Reconfigurable Quadrotor with Controlled Rolling and Turning

Huaiyuan Jia, Runze Ding, Kaixu Dong, Songnan Bai, and Pakpong Chirarattananon

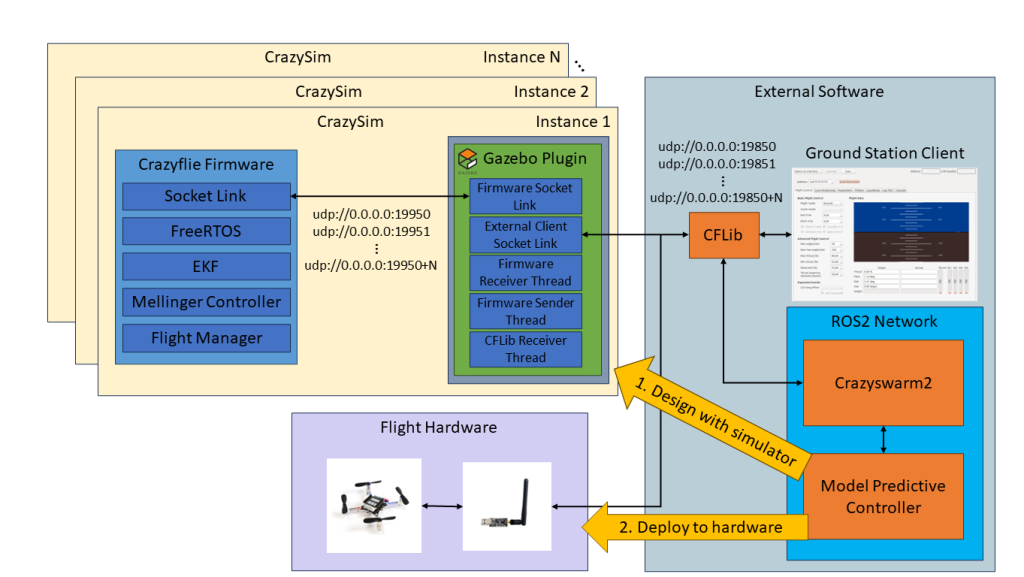

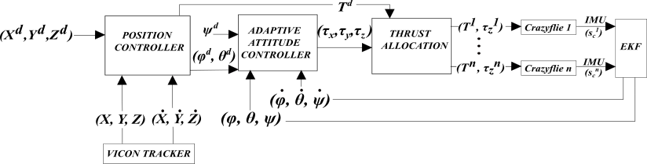

City University of Hong Kong - TinyMPC: Model-Predictive Control on Resource-Constrained Microcontrollers

Khai Nguyen, Sam Schoedel, Anoushka Alavilli, Brian Plancher & Zachary Manchester

Carnegie Mellon University - AMSwarmX: Safe Swarm Coordination in CompleX Environments via Implicit Non-Convex Decomposition of the Obstacle-Free Space

Vivek K. Adajania, SiQi Zhou, Arun Kumar Singh, & Angela P. Schoellig

University of Toronto, Vector Institute, Technical University of Munich, University of Tartu - Ultra-Lightweight Collaborative Mapping for Robot Swarms

Vlad Niculescu, Tommaso Polonelli, Michele Magno, Luca Benini

ETH Zurich - Energy efficient perching and takeoff of a miniature rotorcraft

Yi-Hsuan Hsiao, Songnan Bai, Yongsen Zhou, Huaiyuan Jia, Runze Ding, Yufeng Chen, Zuankai Wang, Pakpong Chirarattananon

City University of Hong Kong, Massachusetts Institute of Technology, The Hong Kong Polytechnic University

But enough talking: the best way to see everything is to watch the video.

A huge thank you to all the researchers we reached out to who agreed to showcase their work! We’re especially grateful for the incredible footage you shared with us. Some of it was new to us, and it truly adds to the richness of the compilation. Your contributions help highlight the fantastic innovations happening within the Crazyflie community. Let’s hope the next compilation also shows projects with the Brushless!