As you may have noticed from the recent blog posts, we were very excited about ICRA London 2023! And it seems we had every right to be, as this conference had more Crazyflie-related papers than any previous robotics conference! In the past, conferences typically had between 13 and 16 papers, but this time… BOOM! 28 papers! In this blog post, we provide a list of these papers and give a general overview of the topics and themes they cover.

So here are some stats:

- ICRA had 1655 papers accepted (43% acceptance rate)

- 28 Crazyflie papers (25 in the proceedings, 1 RA-L, 1 RO-L, 1 late-breaking results poster)

- Workshop papers are not included this time (we didn’t have the time)

- The major topics we discovered were swarm coordination, safe trajectory planning, efficient autonomy, and onboard processing

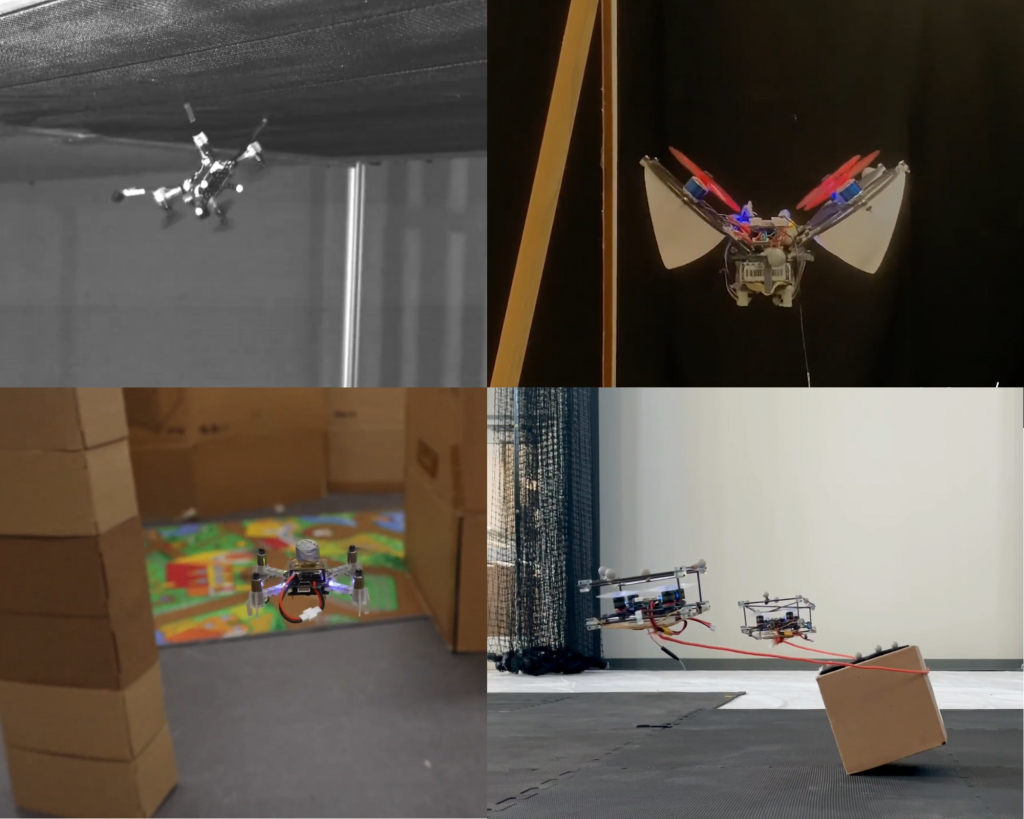

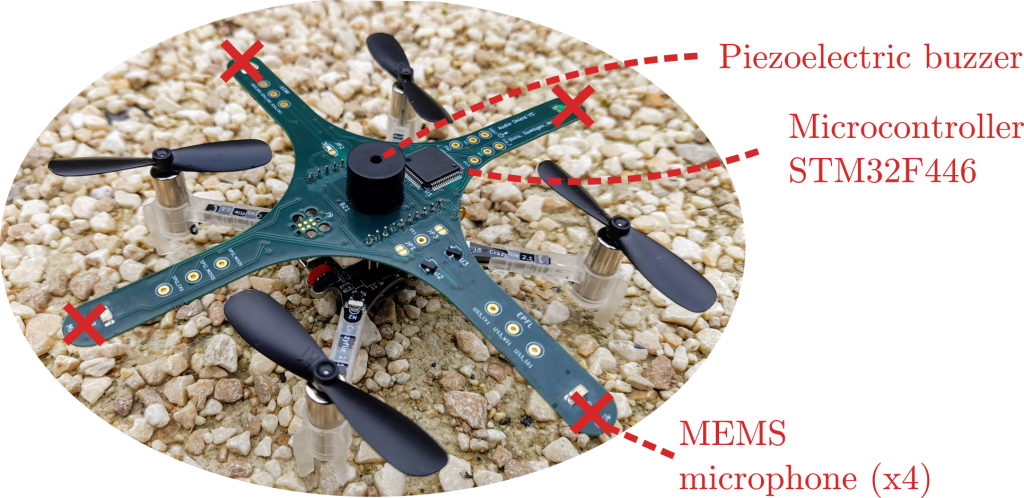

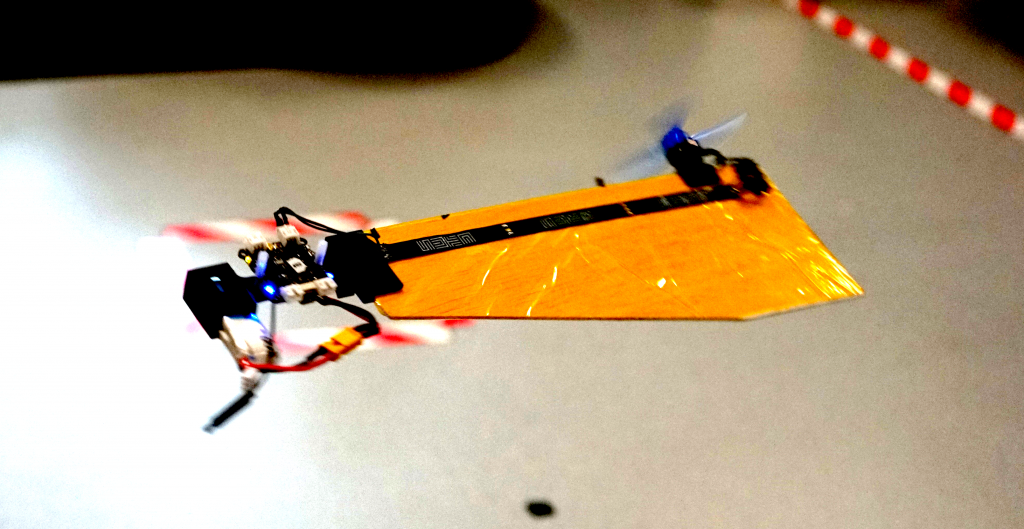

Additionally, we came across a few notable posters, including one about a grappling hook for the Crazyflie [26], a human suit that allows for drone control [5], the Bolt turned into a monocopter with a Jetson companion computer [16], and a flexible fixed-wing platform driven by a barebones Crazyflie [1]. We also observed growing interest in aerial robotics, with approximately 10% of all sessions dedicated to UAVs. Interestingly, 18 of the 28 Crazyflie papers were presented in sessions that did not specialize in UAVs, such as multi-robot systems and vision-based navigation.

Swarm coordination

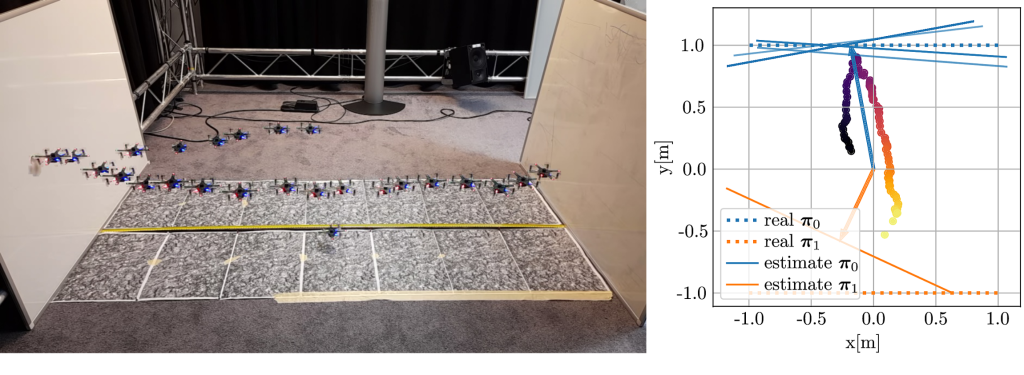

Swarms were a hot topic at ICRA 2023, as Ramon Roche already noted in a tweet. There were over 10 papers dedicated to this topic, including one that involved 16 Crazyflies [9]. Surprisingly, more than half of the papers used multiple Crazyflies. This is already a different landscape from IROS 2022, where autonomous navigation took center stage.

At IROS 2022, we saw single-drone gas mapping with a Crazyflie; now it has been replicated in Webots simulation with two Crazyflies [23]. Does this mean we might see a 3D gas-localizing swarm at IROS 2023? We can’t wait.

Furthermore, we came across a paper featuring a Bolt-based platform that flew in formation while attached to another platform by a string [11], which makes for an intriguing control problem. Another work combined safe trajectory planning with swarm coordination, enabling the swarm to avoid obstacles and people [12]. There were also some notable collaborations with other robots, such as multi-robot pickup and delivery involving the TurtleBot3 Burger [22].

Given the abundance of swarm papers, we can’t go into each of them here, but it’s all very impressive work.

Safe trajectory planning and AI-deck

Another significant buzzword at ICRA was “safety-critical control.” It came up both for ensuring safe control through a human interface [15] and for making reinforcement learning safer [27]. Reinforcement learning is generally considered a less “safe” way to design controllers, which is also why the previous IROS hosted the Safe Robot Learning Competition. Although the Crazyflie itself is quite safe, it makes sense to experiment with safe trajectories on it first before applying them to larger drones.

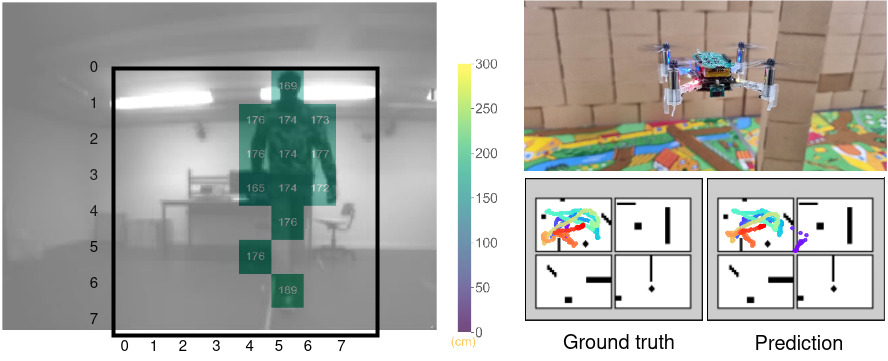

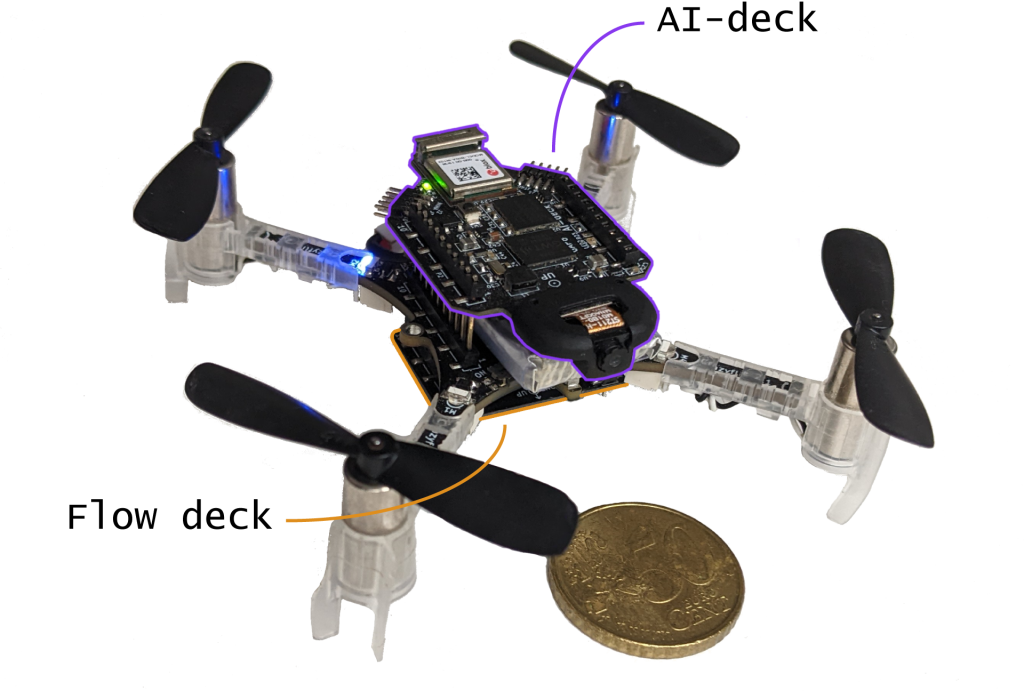

We also found three papers related to the AI-deck. They covered topics such as dense optical flow estimation [17], visual pose estimation [21], and the detection of other Crazyflies [5]. During the conference, we heard that researchers find the AI-deck challenging to work with, but we remain hopeful that we will see more papers exploring its potential in the future!

List of papers

This list includes not only papers with physical Crazyflies, but also papers that use the Crazyflie in simulation or use its parameters. This time the workshop papers are not included, but we’ll add them later once we have the time.

Enjoy!

- ‘A Micro Aircraft with Passive Variable-Sweep Wings’ Songnan Bai, Runze Ding, Pakpong Chirarattananon from City University of Hong Kong

- ‘Onboard Controller Design for Nano UAV Swarm in Operator-Guided Collective Behaviors’ Tugay Alperen Karagüzel, Victor Retamal Guiberteau, Eliseo Ferrante from Vrije Universiteit Amsterdam

- ‘Multi-Target Pursuit by a Decentralized Heterogeneous UAV Swarm Using Deep Multi-Agent Reinforcement Learning’ Maryam Kouzehgar, Youngbin Song, Malika Meghjani, Roland Bouffanais from Singapore University of Technology and Design [Video]

- ‘Inverted Landing in a Small Aerial Robot Via Deep Reinforcement Learning for Triggering and Control of Rotational Maneuvers’ Bryan Habas, Jack W. Langelaan, Bo Cheng from Pennsylvania State University [Video]

- ‘Ultra-Low Power Deep Learning-Based Monocular Relative Localization Onboard Nano-Quadrotors’ Stefano Bonato, Stefano Carlo Lambertenghi, Elia Cereda, Alessandro Giusti, Daniele Palossi from USI-SUPSI-IDSIA Lugano, ISL Zurich [Video]

- ‘A Hybrid Quadratic Programming Framework for Real-Time Embedded Safety-Critical Control’ Ryan Bena, Sushmit Hossain, Buyun Chen, Wei Wu, Quan Nguyen from University of Southern California [Video]

- ‘Distributed Potential iLQR: Scalable Game-Theoretic Trajectory Planning for Multi-Agent Interactions’ Zach Williams, Jushan Chen, Negar Mehr from University of Illinois Urbana-Champaign

- ‘Scalable Task-Driven Robotic Swarm Control Via Collision Avoidance and Learning Mean-Field Control’ Kai Cui, MLI, Christian Fabian, Heinz Koeppl from Technische Universität Darmstadt

- ‘Multi-Agent Spatial Predictive Control with Application to Drone Flocking’ Andreas Brandstätter, Scott Smolka, Scott Stoller, Ashish Tiwari, Radu Grosu from Technische Universität Wien, Stony Brook University, Microsoft Corp, TU Wien [Video]

- ‘Trajectory Planning for the Bidirectional Quadrotor As a Differentially Flat Hybrid System’ Katherine Mao, Jake Welde, M. Ani Hsieh, Vijay Kumar from University of Pennsylvania

- ‘Forming and Controlling Hitches in Midair Using Aerial Robots’ Diego Salazar-Dantonio, Subhrajit Bhattacharya, David Saldana from Lehigh University [Video]

- ‘AMSwarm: An Alternating Minimization Approach for Safe Motion Planning of Quadrotor Swarms in Cluttered Environments’ Vivek Kantilal Adajania, Siqi Zhou, Arun Singh, Angela P. Schoellig from University of Toronto, Technical University of Munich, University of Tartu [Video]

- ‘Decentralized Deadlock-Free Trajectory Planning for Quadrotor Swarm in Obstacle-Rich Environments’ Jungwon Park, Inkyu Jang, H. Jin Kim from Seoul National University

- ‘A Negative Imaginary Theory-Based Time-Varying Group Formation Tracking Scheme for Multi-Robot Systems: Applications to Quadcopters’ Yu-Hsiang Su, Parijat Bhowmick, Alexander Lanzon from The University of Manchester, Indian Institute of Technology Guwahati

- ‘Safe Operations of an Aerial Swarm Via a Cobot Human Swarm Interface’ Sydrak Abdi, Derek Paley from University of Maryland [Video]

- ‘Direct Angular Rate Estimation without Event Motion-Compensation at High Angular Rates’ Matthew Ng, Xinyu Cai, Shaohui Foong from Singapore University of Technology and Design

- ‘NanoFlowNet: Real-Time Dense Optical Flow on a Nano Quadcopter’ Rik Jan Bouwmeester, Federico Paredes-valles, Guido De Croon from Delft University of Technology [Video]

- ‘Adaptive Risk-Tendency: Nano Drone Navigation in Cluttered Environments with Distributional Reinforcement Learning’ Cheng Liu, Erik-jan Van Kampen, Guido De Croon from Delft University of Technology

- ‘Relay Pursuit for Multirobot Target Tracking on Tile Graphs’ Shashwata Mandal, Sourabh Bhattacharya from Iowa State University

- ‘A Distributed Online Optimization Strategy for Cooperative Robotic Surveillance’ Lorenzo Pichierri, Guido Carnevale, Lorenzo Sforni, Andrea Testa, Giuseppe Notarstefano from University of Bologna [Video]

- ‘Deep Neural Network Architecture Search for Accurate Visual Pose Estimation Aboard Nano-UAVs’ Elia Cereda, Luca Crupi, Matteo Risso, Alessio Burrello, Luca Benini, Alessandro Giusti, Daniele Jahier Pagliari, Daniele Palossi from IDSIA USI-SUPSI, Politecnico di Torino, Università di Bologna, University of Bologna, SUPSIETH Zurich [Video]

- ‘Multi-Robot Pickup and Delivery Via Distributed Resource Allocation’ Andrea Camisa, Andrea Testa, Giuseppe Notarstefano from Università di Bologna [Video]

- ‘Multi-Robot 3D Gas Distribution Mapping: Coordination, Information Sharing and Environmental Knowledge’ Chiara Ercolani, Shashank Mahendra Deshmukh, Thomas Laurent Peeters, Alcherio Martinoli from EPFL

- ‘Finding Optimal Modular Robots for Aerial Tasks’ Jiawei Xu, David Saldana from Lehigh University

- ‘Statistical Safety and Robustness Guarantees for Feedback Motion Planning of Unknown Underactuated Stochastic Systems’ Craig Knuth, Glen Chou, Jamie Reese, Joseph Moore from Johns Hopkins University, MIT

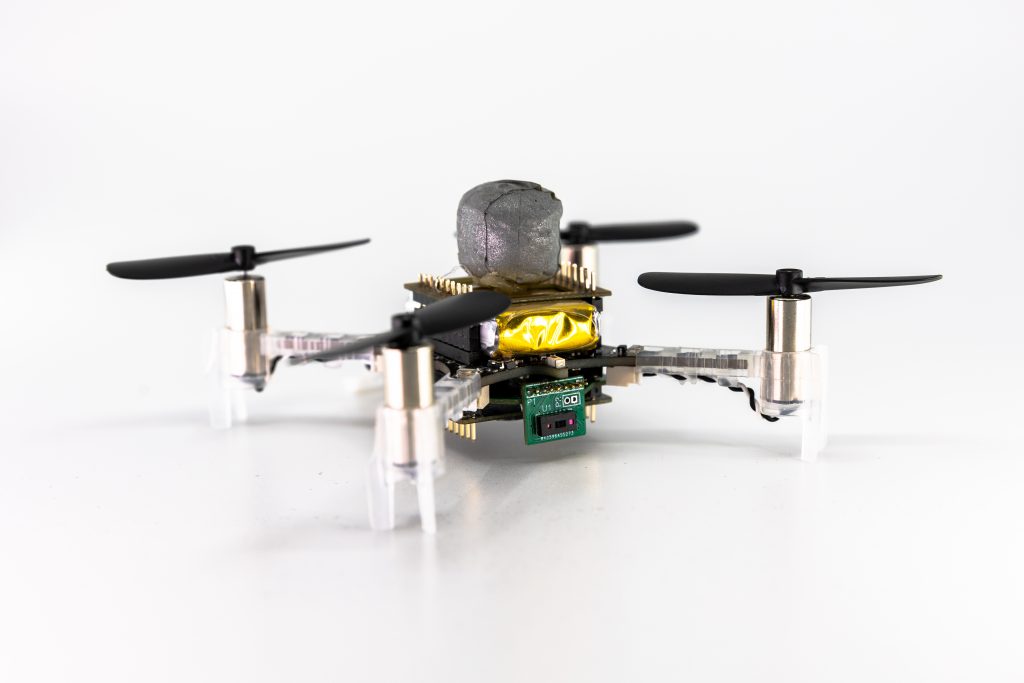

- ‘Spring-Powered Tether Launching Mechanism for Improving Micro-UAV Air Mobility’ Felipe Borja from Carnegie Mellon university

- ‘Reinforcement Learning for Safe Robot Control Using Control Lyapunov Barrier Functions’ Desong Du, Shaohang Han, Naiming Qi, Haitham Bou Ammar, Jun Wang, Wei Pan from Harbin Institute of Technology, Delft University of Technology, Princeton University, University College London [Video]

- ‘Safety-Critical Ergodic Exploration in Cluttered Environments Via Control Barrier Functions’ Cameron Lerch, Dayi Dong, Ian Abraham from Yale University